A future post-doc and I are re-planning an experiment I discussed a couple of years ago and included in an unsuccessful grant proposal, designed to finally find out the real sequence specificity of the DNA uptake machinery. At that time I only proposed a little bit of sequencing, but sequencing keeps getting cheaper so this time we're going to do it right. This blog post is to help me get the numbers clear.

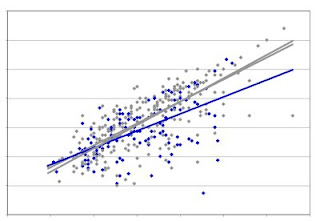

In my original plan we'd start with a pool of mutagenized (or degenerately synthesized) USS, either as short fragments (say 50bp) or inserts in a standard plasmid. The USS in the pool would have, at each position of the 30bp USS, a 91% probability of carrying the consensus base and a 3% probability of carrying any one of the 3 other bases. The flanking sequences might be mutagenized too, or the mutagenized fragment might have been ligated to a non-mutagenized tag or plasmid sequence. We'd give this DNA to competent cells, reisolate the DNA that had bound to the cells, and sequence it. We'd also sequence the 'input' pool. The differences between the 'input' and 'bound' pool sequences would define the specificity.

Only about 20 positions of the USS show a significant consensus, so in the analysis below I'm going to assume that the USS is 20bp long. On average each USS would have about 2 changes away from the consensus in these 20bp, but the range would be broad. Figuring out how broad is part of the reason for this post.

For example, what fraction of the fragments would have no differences from the consensus in the relevant 20bp? That's (0.91)^20, which Google says is about 0.15. What about fragments with 1 difference? I think that's about 20 * 0.09 * (0.91)^19, because there are 20 different possible locations for the difference. That's about 0.3. I fear the calculation will be more complicated for the larger numbers of differences. A calculation similar to the second one above gives 0.56 as the frequency of USSs with 2 mismatches, but that's unlikely to be correct because the sum is 0.15 + 0.3 + 0.56 = 1.01, leaving no possibility of USSs with more than two differences from the consensus. So for the analysis below I'll just use some fake numbers that I think are plausible: 0.1 with no difference, 0.25 with one difference, 0.3 with two differences, 0.2 with three differences, and 0.15 with four or more differences.
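The exact fractions can be checked with the binomial distribution. Here's a minimal Python sketch, assuming the 20 positions mutate independently with a 9% mismatch probability each; `mismatch_distribution` is a hypothetical helper name, not part of any existing analysis. Note that the number of ways to place 2 mismatches is the number of unordered pairs of positions, C(20,2) = 190, rather than 20*19 = 380; using 380 is what produces a value like 0.56.

```python
from math import comb

def mismatch_distribution(n_positions=20, p_mismatch=0.09, max_k=3):
    """Return ([P(exactly k mismatches) for k = 0..max_k], P(more than max_k))."""
    probs = [
        comb(n_positions, k) * p_mismatch**k * (1 - p_mismatch)**(n_positions - k)
        for k in range(max_k + 1)
    ]
    tail = 1.0 - sum(probs)  # everything with more than max_k mismatches
    return probs, tail

probs, tail = mismatch_distribution()
for k, p in enumerate(probs):
    print(f"{k} mismatches: {p:.3f}")
print(f"4 or more: {tail:.3f}")
```

Under these assumptions the fractions come out at roughly 0.15, 0.30, 0.28, 0.17 and 0.10, which is not far from the placeholder numbers used in the rest of the analysis.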

How is a sequence-specific uptake system likely to act on this variation? Let's assume that fragments with 'perfect' consensus USSs are taken up with probability 1. For the simplest version, let's assume all one-position differences have the same effect, reducing binding/uptake to 0.1, and all two-position differences reduce it to 0.01, etc. The 'bound' pool would then be expected to contain 0.78 perfects, 0.195 one-offs, 0.023 two-offs, 0.0015 three-offs and 0.0001 USSs with four or more differences.
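The bound-pool composition is just the input fractions weighted by relative uptake and renormalised. A small sketch of that arithmetic, assuming each mismatch multiplies uptake by the same factor and treating the "four or more" class as exactly four mismatches (both simplifications; `bound_pool` is a hypothetical name):

```python
def bound_pool(input_fractions, penalty):
    """Weight each k-mismatch class by penalty**k, then renormalise to fractions."""
    weights = [f * penalty**k for k, f in enumerate(input_fractions)]
    total = sum(weights)
    return [w / total for w in weights]

# Placeholder input fractions from the text for 0, 1, 2, 3 and 4+ mismatches.
input_fractions = [0.1, 0.25, 0.3, 0.2, 0.15]

for k, frac in enumerate(bound_pool(input_fractions, penalty=0.1)):
    print(f"{k} mismatches: {frac:.4f}")
```

Calling `bound_pool(input_fractions, penalty=0.5)` gives the composition for a milder per-mismatch effect.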

How much sequencing of the 'bound' pool would we have to do to have included all of the three-off sequences (i.e. sequenced each version at least once)? Only about one sequence in 700 will be a three-off, and there are 1140 different ways to choose the three positions that are changed (20*19*18/6, because the order of the positions doesn't matter). But there are three ways to be different at each position that's different... Yikes, I'm in over my head.

OK, let's do it for the one-offs first. There are 20 different locations for the difference, and three possible differences at each location, so that's 60 different versions. About 0.2 of all sequences will be in this class, so we will need to sequence a few thousand USSs to get reasonable coverage. What about the two-offs? There are 20*19/2=190 possible pairs of locations for the differences, and 9 possible differences for each pair of locations, so that's 1710 different sequences. Only about .023 of the fragments in the pool would have two differences, so we'd need to sequence about 75,000 USSs to get one-fold coverage, say about half a million sequences to get good coverage. For the three-offs, the numbers are .0015, 1140, and 27, giving about 31,000 different sequences, with about 2x10^7 sequences needed for one-fold coverage (say 10^8 for reasonable coverage).
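The counting can be sketched in a few lines of Python. This version counts sets of mismatch positions with binomial coefficients (the order in which the positions are listed doesn't matter) and assumes every variant within a class is equally frequent, which the real pool won't quite satisfy. The function names are hypothetical.

```python
from math import comb

def n_variants(k, n_positions=20, alt_bases=3):
    """Number of distinct sequences with exactly k mismatches."""
    return comb(n_positions, k) * alt_bases**k

def one_fold_reads(k, class_fraction):
    """Reads needed so that the expected count per k-mismatch variant is 1."""
    return n_variants(k) / class_fraction

# Bound-pool class fractions taken from the text (uptake reduced to 0.1 per mismatch).
bound_fraction = {1: 0.195, 2: 0.023, 3: 0.0015}

for k, frac in bound_fraction.items():
    reads = one_fold_reads(k, frac)
    print(f"{k}-offs: {n_variants(k)} variants, ~{reads:,.0f} reads for one-fold coverage")
```

One-fold coverage only means an expected count of one read per variant; many variants would still be missed by chance at that depth, which is why a several-fold margin is worth budgeting for.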

If I instead assume that each mismatch reduces binding/uptake only to 0.5 of what it would otherwise be, then the 'bound' pool would have 0.3 perfects, 0.37 one-offs, 0.22 two-offs, 0.07 three-offs and 0.03 USSs with four or more differences from the consensus. The corresponding numbers of fragments sequenced for reasonable coverage of one-offs, two-offs and three-offs would be about 1000, 100,000, and 10^7.

A more realistic model should have some positions more important than others, because that's the main thing we want to find out. What if differences at one of the positions reduce uptake to 0.1, and differences at the other 19 reduce it only to 0.5? We'd of course recover more of the differences in the less-important positions than of the differences in the important position.

How does thinking about this change the amount of sequencing we'd need to do? If all we're interested in is the different degrees of importance of positions considered singly, then we'd easily see this by doing, say, enough to get 100-fold coverage of the important single mismatches. Even 100-fold coverage of the unimportant positions would be quite informative, as we only need enough to be confident that differences at the one 'important' position are underrepresented in our sequences. So 10,000 USS sequences from the 'bound' pool would be plenty to detect various degrees of underrepresentation of differences at the important positions.
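To put rough numbers on this, here's a sketch of the expected mismatch frequency at a single position in the 'bound' pool. It assumes that positions mutate independently and that their effects on uptake multiply, so the other 19 positions cancel out of the calculation; that independence is exactly what the interaction question below would test, so treat it as a simplification. `bound_mismatch_freq` is a hypothetical name.

```python
def bound_mismatch_freq(p_mismatch, penalty):
    """P(mismatch at one position | fragment was taken up), assuming
    independent, multiplicative per-position effects on uptake."""
    return p_mismatch * penalty / ((1 - p_mismatch) + p_mismatch * penalty)

P_MISMATCH = 0.09   # per-position mismatch rate in the input pool (from the text)
N_READS = 10_000    # sequences read from the bound pool (from the text)

for label, penalty in [("important", 0.1), ("unimportant", 0.5)]:
    freq = bound_mismatch_freq(P_MISMATCH, penalty)
    print(f"{label}: {freq:.4f} of bound reads, ~{freq * N_READS:.0f} of {N_READS}")
```

For comparison, the input pool would carry a mismatch at any given position in about 900 of 10,000 reads, so under this model both kinds of position would show clear depletion, and the important one far more strongly.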

But what if we also want to detect how differences at different positions interact? For example, what if having two differences beside each other is much worse for uptake than having two widely separated differences? Or if having differences at positions 3 and 17 is much worse than having differences at other combinations of positions? Or having A and C at positions 3 and 17 is much worse than having other non-consensus bases at those positions?

We would certainly need many more sequences to detect the scarcity of particular combinations of two or more differences. The big question is, how many? Consider just the two-offs. 100,000 sequences would let us get almost all of the non-important two-off variants at least once, and most of them about 5-10 times. But that wouldn't be enough to confidently conclude that the missing ones were not just due to chance -- we'd need at least 10 times that much sequence.
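One way to see why 5-10 fold coverage isn't enough is to ask how many variants would be missing purely by chance. A minimal sketch, assuming read counts per variant are Poisson-distributed and that every two-off variant has the same expected coverage (both idealisations; `expected_absent` is a hypothetical name):

```python
from math import comb, exp

def expected_absent(n_variants, mean_coverage):
    """Expected number of variants with zero reads, assuming Poisson counts
    and equal expected coverage for every variant."""
    return n_variants * exp(-mean_coverage)

# Distinct two-off sequences: unordered pairs of positions times 3*3 base choices.
n_two_offs = comb(20, 2) * 3**2

for coverage in (5, 10, 50):
    missing = expected_absent(n_two_offs, coverage)
    print(f"{coverage}-fold: ~{missing:.3g} variants missing by chance")
```

At 5-fold mean coverage around a dozen variants would be expected to be absent even with no selection at all, so an absent variant says little; at much deeper coverage a chance absence becomes vanishingly unlikely and a missing or scarce variant starts to mean something.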

How much sequencing is that? If the fragments are 50bp, and we want, say, 10^6 of them, that's 5x10^7 bp of sequence. Future post-doc, that's a modest amount, right?

Given a sufficiently large data set, we do have access to software that would detect correlations between sequences at different positions (we used it for the correlation analysis in the USS manuscript).

Once we had identified candidates for significantly under-represented sequences, I wonder if there is a technique we could use to go back to the pool and confirm that these sequences were genuinely under-represented? Something analogous to validating microarray results with Q-PCR? Maybe the reverse? Ideally, we would have an oligo array with every possible two-off USS, and hybridize our bound pool to it. Probably not worth the trouble.

The other reason I'm writing this post is to figure out how much DNA we'd need to start with, in order to end up with enough sequences to define the specificity. If the 'bound' pool contained 1 microgram of 50bp fragments, that would be about 10^13 fragments. This should be enough to encompass way more diversity than we would ever be able to sequence. To get 1 microgram we'd need to start with an awful lot of cells, but even if we cut this down to 1 ng we'd still be fine.
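The fragment count follows from the mass of the DNA and the mass of one fragment. A rough sketch, assuming double-stranded fragments and the usual approximation of about 650 g/mol per base pair (an assumed average; the exact value depends on base composition and end chemistry):

```python
AVOGADRO = 6.022e23        # molecules per mole
G_PER_MOL_PER_BP = 650.0   # assumed average mass of one double-stranded base pair

def n_fragments(mass_grams, length_bp):
    """Approximate number of double-stranded DNA fragments in a given mass."""
    moles = mass_grams / (length_bp * G_PER_MOL_PER_BP)
    return moles * AVOGADRO

print(f"1 ug of 50bp fragments: {n_fragments(1e-6, 50):.1e}")
print(f"1 ng of 50bp fragments: {n_fragments(1e-9, 50):.1e}")
```

Both amounts come out many orders of magnitude above the 10^6 or so sequences discussed above, so the amount of recovered DNA shouldn't limit the diversity we can sample.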

The top graph shows how the cultures grew under the different treatments. BHI is the rich medium, and they grew nicely in it. Adding 1mM cAMP slowed growth down a little bit, which is not surprising, as cAMP is a powerful metabolic signal molecule. Transferring the cells to the starvation medium MIV stopped their growth, and even caused quite a drop in cfu/ml, but after a few hours they began to grow again. This could be because A. pleuropneumoniae differs from H. influenzae in being able to synthesize its own pyrimidines - we would need to check its genome.
